I’m on a major decluttering toot. When I realised that the filing cabinet I bought three years ago would no longer close with all the papers stuffed in it, I knew something had to change. I’ve been shredding like it’s Houston in 2001. I have the duplex scanner to suck in the stuff I need to keep. I’m moving to paperless wherever possible to stop it building up again.

My bank provides PDF statements. Of this I approve. PDF is almost perfect for this: it provides an electronic version of the page, but with searchable text and the potential for some level of security. Except, this is not the way that my bank does it. At first glance, the text looks pretty harmless:

Zoom in, and it gets a bit blocky:

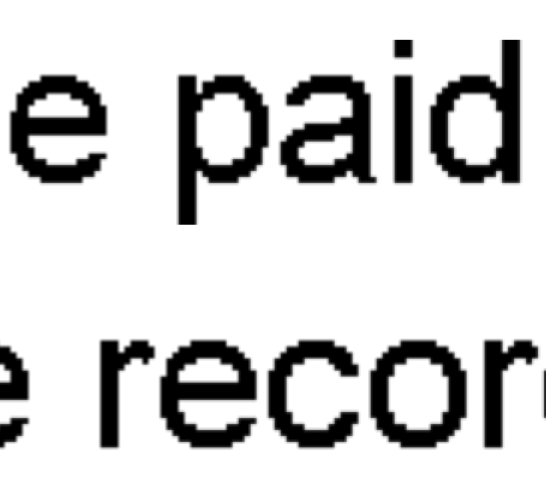

Zoom right in:

Aargh! Blockarama! Did they really store text as bitmaps? Sure enough, pdftotext output from the files contains no text. Running pdfimages produces hundreds of tiny images.

Dear oh dear. This format combines the worst of electronic documents with paper’s lack of computer indexability. The producer claims to be Xenos D2eVision. Smooth work there, Xenos.
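If you want to check your own statements for the same problem, the pdftotext test can be wrapped in a small filter. This is only a rough sketch, not something from my actual workflow: `text_layer_status` is a name I made up, and all it does is report whether anything other than whitespace arrives on standard input.

```shell
#!/bin/sh
# Hypothetical helper (name is illustrative): reads text on stdin and
# reports whether it contains any non-whitespace character.
# An image-only PDF run through pdftotext yields only blank lines and
# form feeds, which all count as whitespace here.
text_layer_status() {
    if grep -q '[^[:space:]]'; then
        echo "text layer present"
    else
        echo "no text layer"
    fi
}

# Intended use (needs pdftotext from poppler; "-" sends text to stdout):
#   pdftotext statement.pdf - | text_layer_status
```

Here `statement.pdf` is a placeholder filename. The same filter is handy again at the end, to confirm that the rebuilt PDF really does contain text.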

So, how can I fix this? It’s a bit of a pain to set this workflow up, but what I’ve done is:

- Convert the PDF to individual TIFF files at 300 dpi. Ghostscript is good for this:

      gs -sDEVICE=tiffg4 -r300x300 -sOutputFile=file%03d.tif -dNOPAUSE -dBATCH -- file.pdf

- Run Tesseract OCR on the TIFF files to make hOCR output:

      for f in file*.tif
      do
        tesseract "$f" "$(basename "$f" .tif)" hocr
      done

  Update: Cuneiform seems to work better than Tesseract when feeding pdfbeads:

      for f in file*.tif
      do
        cuneiform -f hocr -o "$(basename "$f" .tif).html" "$f"
      done

- Move the images and the hOCR files to a new folder; the filenames must correspond, so file001.tif needs file001.html, file002.tif needs file002.html, etc.

- In the new folder, run

      pdfbeads * > ../Output.pdf
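Because the filenames have to line up, a quick pre-flight check before running pdfbeads can save a confusing run. The following is a minimal sketch under my own assumptions: `check_pairs` is a hypothetical helper, and it only checks that each `.tif` has a same-named `.html` beside it, not that the hOCR inside is any good. (Newer Tesseract releases may write `.hocr` rather than `.html`; if yours does, rename the files first.)

```shell
#!/bin/sh
# Hypothetical pre-flight check (name is illustrative): every NAME.tif in
# the given folder (default: current folder) should have a NAME.html
# next to it. Prints each image lacking an hOCR file and returns
# non-zero if any are missing.
check_pairs() {
    dir=${1:-.}
    missing=0
    for tif in "$dir"/*.tif; do
        [ -e "$tif" ] || continue          # glob matched nothing
        html="${tif%.tif}.html"
        if [ ! -e "$html" ]; then
            echo "missing hOCR for $(basename "$tif")"
            missing=1
        fi
    done
    return "$missing"
}
```

In the new folder, something like `check_pairs . && pdfbeads * > ../Output.pdf` would then only build the PDF when every page has its OCR output.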

The files are really small, and the text is recognized pretty well. It still looks pretty bad, but at least the text can be copied and indexed.

This thread “Convert Scanned Images to a Single PDF File” got me up and running with PDFBeads. You might also have success using the method described here: “How to extract text with OCR from a PDF on Linux?” It uses hocr2pdf to create single-page OCR’d PDFs, then joins them.
